A single click in a dim room halfway across the world can set a city on fire. Not with a missile, but with a frame rate.

For months, a digital entity known as "Explosive Media" lived in the recommendations sidebar of millions. It didn't look like a traditional news agency. It didn't have the tired, gray aesthetic of state-run television or the frantic scrolling tickers of 24-hour cable news. Instead, it offered something far more seductive: the polished, uncanny shimmer of high-end AI animation.

It was a ghost in the machine, a pro-Iranian influence operation that used generative AI to imitate the polish of Pixar movies and video game cinematics, packaging the most volatile geopolitics on earth into bite-sized, viral entertainment. Then, with the suddenness of a power surge, YouTube pulled the plug. The channel is gone. The pixels have been wiped. But the silence it leaves behind is louder than the videos ever were.

The Puppet Master in the Code

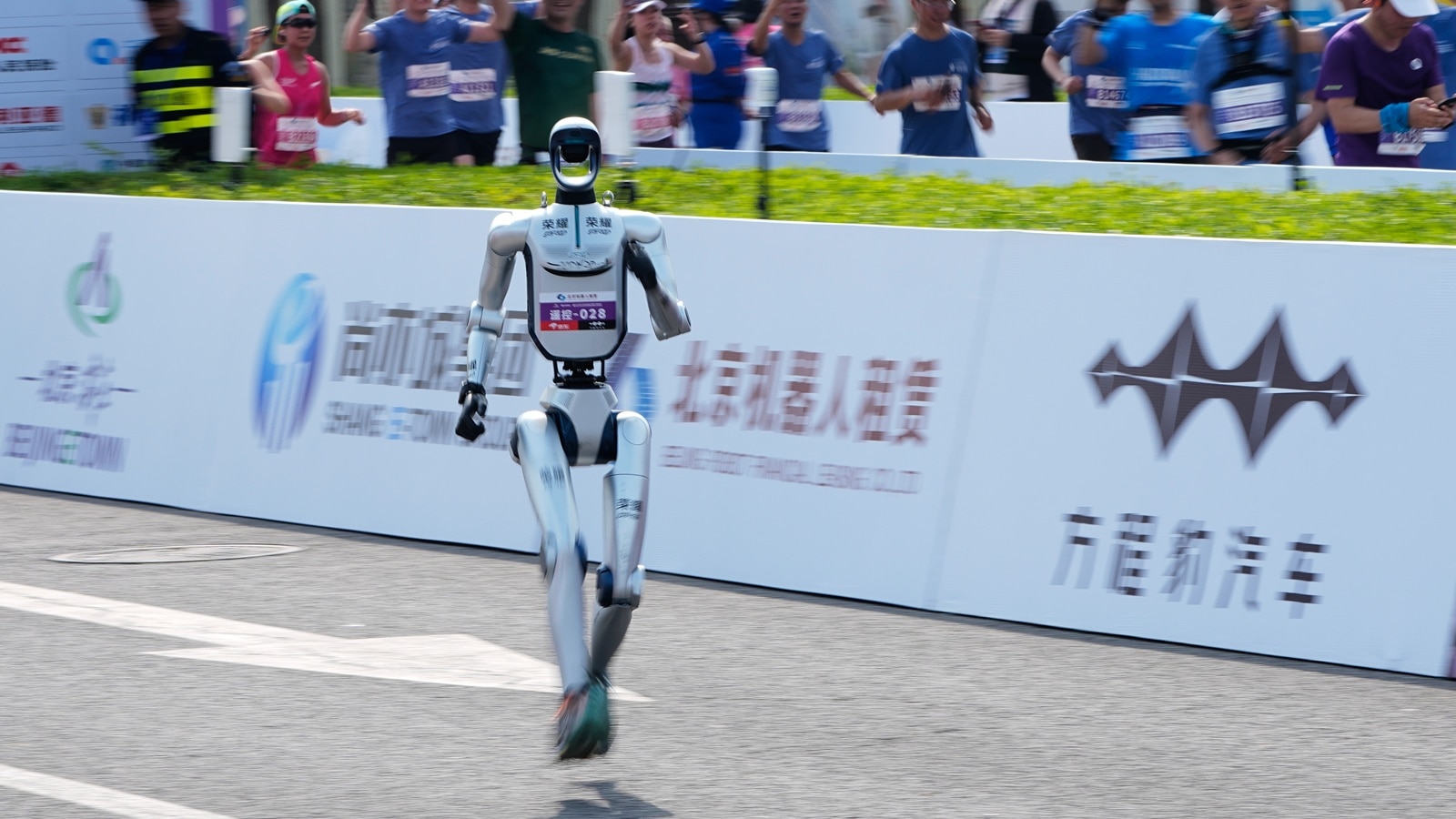

Imagine a young man in London or New York or Berlin. He is scrolling through his feed, eyes glazed, looking for a distraction. He finds a video titled with just enough curiosity to bait a click. Inside, the animation is fluid. The lighting is cinematic. It shows a stylized, heroic narrative of resistance and explosive retribution. There is no human face to hold accountable—just the perfect, unblinking eyes of an AI-generated soldier.

This wasn't just "content." It was a weaponized aesthetic.

Explosive Media wasn't interested in debate. It was interested in immersion. By using generative AI, the creators bypassed the need for expensive film crews, permits, or even real footage. They could manifest a reality where their side was always righteous and their enemies were always trembling, all rendered in 4K resolution. This is the new frontier of influence: the ability to manufacture a "truth" that looks better than the real thing.

The Jerusalem Post and various intelligence monitors tracked this digital trail back to Tehran’s sphere of influence. It was a sophisticated pipeline. High-concept scripts were fed into generative models, which spat out animations designed to trigger the amygdala, the part of the brain most closely associated with fear and rage. When we talk about "AI safety," we usually worry about robots taking our jobs. We should worry more about the robots taking our empathy.

The Invisible Stakes of a Deleted Channel

Why does a ban matter? If you cut off one head, two more grow in the comments section.

But the removal of Explosive Media is more than just a moderation victory; it is an admission of a terrifying vulnerability. YouTube’s decision to scrub the channel, alongside the removal of its presence on other major platforms, highlights a desperate race between the speed of generation and the speed of detection.

The human eye is remarkably good at spotting a lie when it’s told by a person. We see the sweat, the shifty eyes, the tremor in the voice. We have evolved over millennia to detect deception in our peers. We have had no time to evolve a defense against a neural network. When a machine tells a lie, it does so with a steady hand. It doesn’t blink. It doesn’t feel the weight of the blood it might eventually spill.

Consider the hypothetical case of a teenager in a conflict zone. He sees these animations. They don’t look like "propaganda"—that’s a word for old posters and grainy speeches. These look like the games he plays. They speak his visual language. Every frame is a subtle nudge toward the idea that violence is not just necessary, but beautiful. That is the invisible stake. It isn't just about who wins an argument online; it’s about who controls the visual vocabulary of the next generation.

The Algorithm is an Accomplice

We like to think of platforms like YouTube as neutral libraries. They aren't. They are engines of engagement. The algorithm didn't know that Explosive Media was a state-linked influence operation. It only knew that people were watching. It knew that the "watch time" was high because the animations were captivating. It knew that the "share" rate was peaking because the content was polarizing.

The machine was helping the machine.

The AI that created the videos found a perfect partner in the AI that distributed them. It was a closed loop of radicalization. The more a user watched, the more the algorithm fed them similar, AI-enhanced narratives. It created a digital hall of mirrors where the only thing reflecting back was a meticulously crafted version of hate, rendered with the soft glow of a virtual sunset.

The facts are stark: YouTube removed the channel for violating its policies on "coordinated inauthentic behavior." That is a clinical term for a very human problem. "Coordinated inauthentic behavior" means someone is pretending to be a community when they are actually a government. It means the "grassroots" support you see in the comments is actually a server farm.

The Human Element in a World of Ghosts

Behind every AI animation is a human intent. This is the truth we often forget. We get so caught up in the "how"—the diffusion models, the rendering engines, the prompt engineering—that we lose sight of the "why."

Someone sat at a desk. Someone chose the colors. Someone decided that a specific explosion should look "heroic" rather than tragic. The technology is new, but the impulse is as old as the first campfire story meant to stir a tribe to war. The difference is the scale. A story told by a fire reaches fifty people. A story told by an AI and amplified by an algorithm reaches fifty million.

We are living in an era of "Deep Doubt." It isn't just that we can't believe what we see; it's that we are beginning to doubt our own reactions. When you realize that the video that moved you to tears or made your blood boil was generated by a script and a GPU, something breaks inside the social contract. Trust is a non-renewable resource. Once it’s gone, you can’t render it back into existence.

The Friction of Reality

The ban on Explosive Media creates temporary friction. It forces the propagandists to find new channels, new handles, and new ways to bypass the filters. It buys us a little time.

But time is exactly what we are losing. The gap between a fake video and a real one is closing to the point of insignificance. We are moving toward a world where "proof" is a legacy concept. Soon, every video of a battlefield, every speech by a leader, and every "leak" from a war room will be viewed through the lens of suspicion.

Is it real? Or is it Explosive Media 2.0?

This skepticism, while necessary, is its own kind of prison. If we can't believe anything, we eventually stop caring about everything. That is the ultimate goal of these operations. It’s not just to make you believe a specific lie; it’s to make you give up on the idea that truth exists at all.

A Ghost in the Feed

The channel is gone, but the templates remain. The prompts are saved. The models are getting smarter.

The removal of one pro-Iranian channel is a tactical win in a strategic nightmare. It reminds us that the digital world is not a separate realm. It is a direct extension of the physical one. The animations created by "Explosive Media" weren't harmless cartoons; they were blueprints for a future where war is marketed like a blockbuster film, and where the audience doesn't even know they've been cast as the extras.

As you scroll tonight, look closer at the edges of the frames. Look for the slight shimmer where the AI struggled to define a hand or a shadow. But don't just look for the technical flaws. Look for the emotional ones. Ask why a video wants you to feel a certain way. Ask who benefits from your anger.

The pixels may be silent for now, but the code is still running, waiting for the next click to bring the ghosts back to life.

The screen goes black. The reflection you see in the glass is the only thing left that is truly real.

Protect it.